AI literacy now has a national standard. Flintolabs already runs on it.

In February 2026, the U.S. Department of Labor published something that should be on every parent's and educator's radar: a national AI Literacy Framework. It's the first federal attempt to define what AI literacy actually means, what it should include, and how it should be taught, across the entire American workforce and education system.

Why does this matter for your student? Because the DOL is making it clear that AI literacy is no longer optional. It's foundational. Every worker, every student, every job seeker will need these skills to succeed in an AI-driven economy. The framework isn't a suggestion buried in a policy memo. It's a direct signal from the federal government that the workforce is changing, and preparation needs to start now.

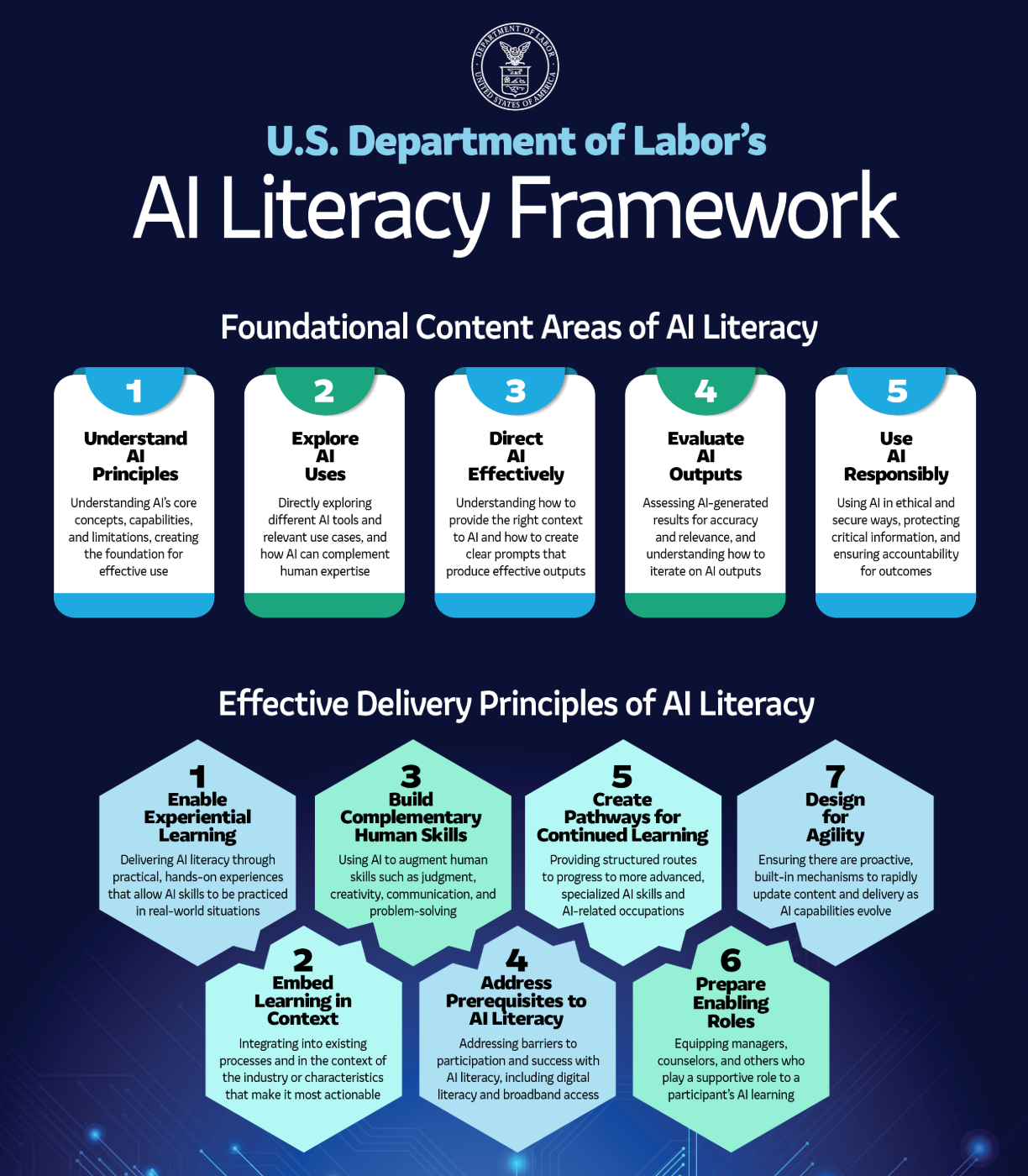

The framework outlines five foundational content areas that define what AI literacy looks like, and seven delivery principles that define how it should be taught effectively. When we read through it, we realized something: Flintolabs has been building its program around these exact principles since day one.

Here's how.

The five foundational content areas

The DOL framework defines five areas that any AI literacy program should cover. Let's walk through each one and how it maps to what Flintolabs students experience.

1. Understand AI principles

The framework says workers need to understand what AI is and how it works, not at a PhD level, but enough to have the vocabulary and mental models to use AI tools confidently. This includes understanding that AI generates outputs based on patterns in data, that it can hallucinate and produce wrong answers that sound confident, and that every AI system reflects human design decisions.

At Flintolabs, this is where every cohort begins. Before students touch a single AI tool, they learn the foundations: how AI models work, what generative AI actually does under the hood, why the same prompt can produce different results, and where AI falls short. We've written about this before, including a deep dive on AI's limitations based on research from leading AI scientists. Our students don't just learn to use AI. They learn to understand it, which changes how they interact with it for everything that follows.

2. Explore AI uses

The framework emphasizes that learners should explore how AI is being applied in real-world settings, from productivity tools to creative assistance to decision-support systems. The goal is building familiarity and judgment so workers recognize when to apply AI and where human input remains essential.

This is core to how Flintolabs structures its immersive labs. Students don't study AI use cases from a textbook. They explore them by building. They build chatbots and learn natural language processing. They create face filters and learn computer vision. They build augmented reality apps and understand how AI layers onto the physical world. Each lab is designed around a real AI capability, and students walk away understanding not just what AI can do, but when it's the right tool and when it isn't.

3. Direct AI effectively

The DOL framework highlights that most AI tools depend heavily on the quality of input they receive. Users need to learn how to frame context, structure prompts clearly, supply relevant data, and iterate on outputs. This doesn't require coding skills, but it does require a specific way of thinking.

At Flintolabs, students learn to direct AI by actually directing it, repeatedly, across different projects. They learn prompting techniques not as an abstract exercise but as part of building real applications. When a chatbot gives a bad response, they debug the prompt. When an AI-generated output doesn't match the intent, they iterate. We recently introduced dedicated Claude sessions into our curriculum specifically because the tools are evolving so fast, and students need to practice directing the latest AI systems, not yesterday's.
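For readers who want to see what "framing context, structuring a prompt, supplying data, and iterating" can look like in practice, here is a minimal sketch in Python. It is an illustration only, not Flintolabs curriculum code: the `Prompt` class, its field names, and the library example are all hypothetical, and it does not call any real AI service.

```python
from dataclasses import dataclass, field


@dataclass
class Prompt:
    """A structured prompt. All field names here are hypothetical."""
    context: str                      # who the AI is helping and in what setting
    task: str                         # what we want it to do
    data: list[str] = field(default_factory=list)       # facts it should rely on
    revisions: list[str] = field(default_factory=list)  # notes added after bad outputs

    def render(self) -> str:
        """Assemble the pieces into the text that would be sent to an AI tool."""
        parts = [f"Context: {self.context}", f"Task: {self.task}"]
        if self.data:
            parts.append("Data:\n" + "\n".join(f"- {item}" for item in self.data))
        if self.revisions:
            parts.append("Revisions:\n" + "\n".join(f"- {note}" for note in self.revisions))
        return "\n\n".join(parts)

    def iterate(self, note: str) -> "Prompt":
        """Return a new prompt with one more revision note; the original is unchanged."""
        return Prompt(self.context, self.task, list(self.data), [*self.revisions, note])


# First attempt, then a revision after the response came back too long.
first = Prompt(
    context="You are a help bot for a school library.",
    task="Answer questions about opening hours.",
    data=["Open Monday to Friday, 8am to 4pm"],
)
second = first.iterate("Keep answers under two sentences.")
print(second.render())
```

The point of the sketch is the habit it encodes: when an output misses the intent, the fix is a specific, recorded change to the prompt rather than a fresh guess.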

4. Evaluate AI outputs

The framework stresses that AI outputs always require human review. Workers need to verify accuracy, assess completeness, spot logical errors, and apply their own expertise to determine whether an output is actually useful.

This is one of the most important things our students learn, and it comes naturally through the building process. When you're creating a working app, you can't simply accept the first output the AI gives you. You test it. You compare it to what you expected. You find the gaps. Our students develop this evaluation muscle through constant iteration. They test different approaches, compare results, and refine their work based on what they observe. By the time they've completed a project, they have real experience distinguishing between AI output that looks good and output that actually works.
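One way to make "compare it to what you expected" concrete is to write the expectations down before looking at the output. The sketch below is a hypothetical illustration in Python, not a Flintolabs tool: the function name `evaluate_output` and its checks are assumptions, and a real review would also need human judgment about accuracy and tone.

```python
def evaluate_output(output: str, must_include: list[str], max_words: int) -> list[str]:
    """Return a list of problems found in an AI output (empty list means none found).

    The checks are deliberately simple: required phrases and a length limit.
    """
    problems = []
    for phrase in must_include:
        # Case-insensitive check that each required fact appears somewhere.
        if phrase.lower() not in output.lower():
            problems.append(f"missing: {phrase}")
    word_count = len(output.split())
    if word_count > max_words:
        problems.append(f"too long: {word_count} words (limit {max_words})")
    return problems


# An answer that reads fine but leaves out the closing time.
print(evaluate_output("The library opens at 8am.", ["8am", "4pm"], max_words=20))
# → ['missing: 4pm']
```

The example answer looks reasonable at a glance, which is exactly why a written checklist helps: it catches the gap between output that looks good and output that actually works.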

5. Use AI responsibly

The framework's final content area focuses on responsible use: protecting sensitive information, following policies around AI use, avoiding misuse, managing context-specific risks, and maintaining accountability for outcomes. AI doesn't make decisions for you; you're still responsible for what you produce with it.

Responsible use is woven throughout the Flintolabs experience. Students learn about data privacy, about the ethical boundaries of AI-generated content, and about what it means to be accountable for the products they build.

We also run dedicated sessions on deepfakes, where students learn how AI-generated images, audio, and video are created, how to identify them, and why they pose real risks to trust and information integrity. These sessions go beyond awareness. Students explore how the industry can adopt safeguards like watermarking AI-generated content, and they discuss how they, as the next generation of builders and citizens, can advocate for these protections.

When students build apps that interact with real users, they confront responsible use questions directly: What data should this app collect? What should it not collect? What happens if the AI gets something wrong in a context where it matters? How do you ensure that AI-generated content is transparent about its origins? These aren't hypothetical discussions. They're design decisions students make as they build.

The seven delivery principles

The DOL framework doesn't just say what to teach. It says how to teach it effectively. Here's how Flintolabs aligns with each delivery principle.

1. Enable experiential learning

The framework is unambiguous on this point: AI literacy is most effectively developed through hands-on use, not reading about AI in the abstract. Workers build confidence by using AI in real-world contexts to solve actual tasks.

This is the foundation Flintolabs was built on. Every student builds apps from the first session. There are no weeks of theory before you get to touch a tool. You learn by doing, by building, by breaking things and fixing them. Our immersive labs are structured around real-world task integration, live feedback and iteration, and progressive difficulty, all of which the DOL framework specifically calls out as effective experiential learning approaches.

2. Embed learning in context

The framework emphasizes that AI literacy becomes more impactful when it's relevant to what learners actually do. Contextualized learning reduces friction and reinforces how AI fits into real workflows.

This is exactly why Flintolabs includes two months of Startup School in its program. After students build foundational AI skills, they apply those skills in the context of building a real startup. AI gets embedded into every aspect of the process: idea validation, market research, revenue analysis, feedback collection, pitch development. Students aren't learning AI in isolation. They're learning AI as part of solving a real problem they care about, which is how the learning sticks.

3. Build complementary human skills

The framework makes a critical point: AI tools are amplifiers of human input, and their effectiveness depends on the skills, knowledge, and judgment of the people using them. Programs should build critical thinking, creativity, communication, and domain expertise alongside AI skills.

At Flintolabs, we've always believed that AI fluency without human judgment is incomplete. Our students don't just learn to generate outputs. They learn to think critically about which outputs to use. They present their work, defend their decisions, and communicate their ideas clearly, skills that matter as much as the technical ability to prompt an AI model. Startup School takes this further: students pitch to judges, collect and incorporate feedback, and collaborate with peers, all of which build the complementary human skills the DOL framework describes.

4. Address prerequisites to AI literacy

The framework acknowledges that AI literacy can't happen if learners lack foundational digital skills. Programs should proactively identify and address these barriers.

Flintolabs addresses this head-on. Before students jump into AI, they learn the non-AI skills required to build real products: how apps are structured, what frontend and backend mean, how to use tools like GitHub for version control, and how to navigate development environments. We don't assume students come in knowing these things. We teach them as part of the program because you can't build with AI if you don't understand the building blocks that AI sits on top of.

5. Create pathways for continued learning

The framework says foundational literacy is only the starting point. Programs should create visible routes for learners to build on what they've learned, pursue specialized training, or transition into AI-related work.

Flintolabs is designed with continuation built in. After the six-month program, students can continue building on their skills through ongoing projects and internship opportunities. The framework specifically calls out encouraging builder and entrepreneurship pathways as a key delivery approach, and that's exactly what Startup School is: a structured path from AI literacy to AI-powered entrepreneurship. Students leave with a portfolio of real projects, the skills to keep building, and pathways to apply what they've learned in real-world settings.

6. Prepare enabling roles

The framework recognizes that AI literacy efforts work better when the people around learners, whether managers, mentors, or parents, are equipped to support the learning process.

Flintolabs takes this seriously by bringing parents along for the journey. We provide regular updates on what students are learning, how they're progressing, and what AI concepts they're working with. This isn't just for transparency. It's so parents can reinforce learning at home, have informed conversations about AI with their kids, and understand how these skills connect to their student's future. When parents understand what their kids are building and why, the entire learning experience becomes stronger.

7. Design for agility

The framework's final principle is perhaps the most important: AI moves fast, and training must be designed with built-in mechanisms for adaptation. Fixed curricula become outdated almost immediately.

This is something we live every day at Flintolabs. When Claude emerged as a powerful new tool for building, we didn't wait for the next curriculum cycle. We introduced Claude-focused sessions directly into the program. When new AI capabilities emerged that changed how students could build apps, we updated our labs to reflect them. Our curriculum is modular by design, and we treat agility not as a nice-to-have but as a core operating principle. The DOL framework validates what we've learned through experience: in AI education, standing still means falling behind.

The bottom line

The DOL's AI Literacy Framework is an important signal. It tells employers, educators, and families that AI literacy is now a national priority, and it provides a clear structure for what effective AI education looks like.

For parents evaluating programs for their students, this framework is a useful benchmark. Ask whether a program covers all five content areas. Ask whether it follows the seven delivery principles. Ask whether students are actually building things, or just watching someone else do it.

At Flintolabs, we didn't design our program to match a government framework. We designed it to prepare students for the world they're actually walking into. The fact that the DOL framework aligns so closely with what we've been doing is a validation of the approach: hands-on, project-based, contextual, agile, and always focused on building real skills that matter.

If this is the standard the country is setting for AI literacy, your student can start building toward it today.

References

U.S. Department of Labor, Employment and Training Administration. "The U.S. Department of Labor's Artificial Intelligence Literacy Framework." Training and Employment Notice No. 07-25, February 13, 2026, dol.gov/agencies/eta/advisories/ten-07-25.
